For years, most internet and social media users have understood that they need to take everything they see online with a grain of salt. After all, there’s no requirement to publish the truth on the internet. This understanding may be hard-won, but it’s now an expected part of daily tasks ranging from checking email to exploring social media, selling used items, or online dating.

Now, we know to tamp down our excitement when we get a message from a Nigerian prince or receive an offer from someone who wants to pay double for our used merchandise.

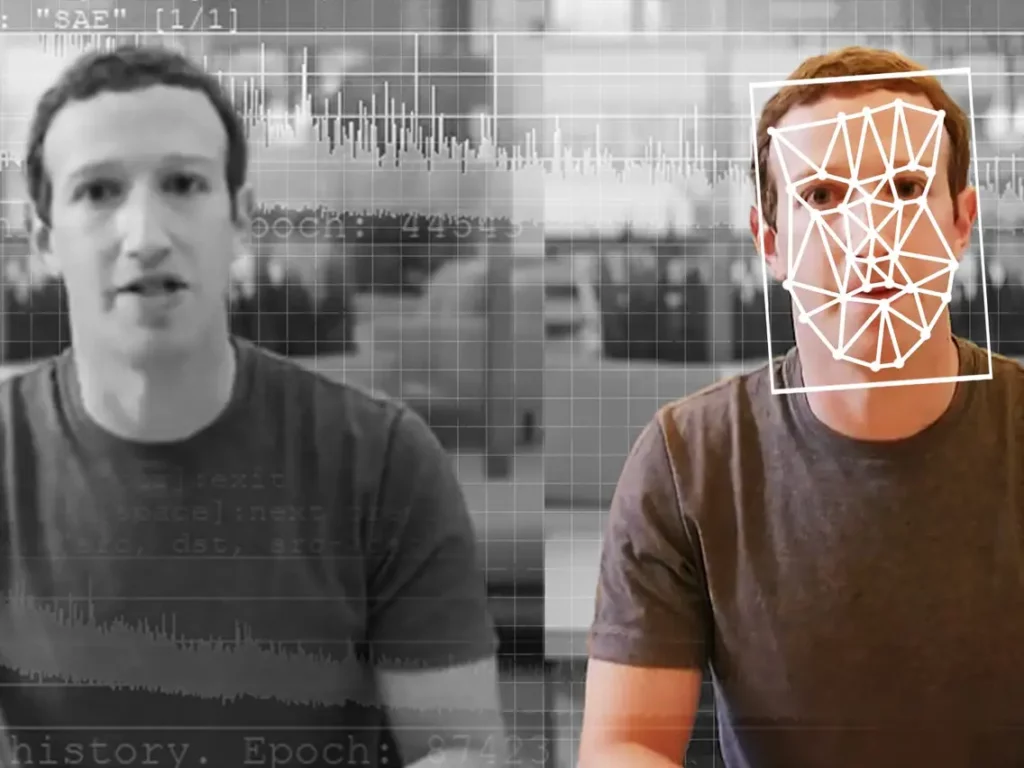

However, the rising trend of deepfakes has disrupted these tasks once more. Learning how to identify and respond to these fraudulent images, videos, or audio clips is now a required skill for anyone who uses the internet.

Deepfakes have become a valuable tool for scammers, who use them to deceive individuals and organizations alike. If you’ve fallen for one of these scams before, don’t feel bad. This is a new and emerging technology, and many countries have struggled to respond in a way that protects innocent, honest people.

Today, we’ll explore the rising trend of deepfakes and show how Dentity can step in to help protect you from falling victim to these scams.

What is a Deepfake?

First, we need to learn as much as possible about deepfakes, so we can figure out how to defend ourselves against them.

Deepfakes are AI-generated media that use a technology called deep learning to produce a fake image, audio clip, or video. Often, they take a person’s actual image or voice and place it in situations that never happened in real life. However, deepfake technology can also be used to create an entirely fictional persona, including realistic moving video.

Early deepfakes were primarily used for adult content, but since 2019, scammers have learned how to deepfake a voice, and this trick has slowly crept into more mainstream online outlets.

Common Deepfake Scams

Unfortunately, there are lots of common scams that take advantage of deepfakes and deep learning technology. Here are some of the most prevalent in 2023.

A panicked loved one on the phone

This deepfake scam, which uses an AI model to simulate a person’s voice, swindled more than 5,000 victims out of over $11 million in 2022 alone. With just a few sentences of recorded audio, a scammer can use deep learning technology to build a model of a person’s voice and make it say whatever they want.

In most situations, this scam is conducted over the phone. The victim receives a call from what looks like their loved one’s phone number, then hears a voice that sounds exactly like their friend or family member urgently asking for help. Victims then send money through Bitcoin or gift cards, and that money is nearly impossible to get back.

Voice phishing

Similar technology can also be used to solicit sensitive information that would otherwise not be disclosed. This is known as voice phishing, or vishing. In these situations, scammers use deepfake technology to simulate a trusted individual’s voice and then request sensitive information that can be used for identity theft.

Typically, the scammer in these situations isn’t asking for money directly but may seek to learn your credit card number, address, or Social Security number. This information can then be used to access more funds or be sold on the dark web.

How to Ensure You’re Interacting with Real People Online

It’s easy to get taken in when you get a call that sounds exactly like someone you know. A familiar voice makes it much easier to overlook red flags that you might otherwise have noticed.

Don’t blame yourself! To help you stay safe, check out the following tips to ensure you’re always interacting with real people online.

Hang up and call back

Unfortunately, it’s now effortless to fake a caller ID number. If you get a call from someone who says they’re a friend or family member, don’t give in to the panic. Hang up and call them back at their real number. If they’re really in distress, they’ll answer again, and you can continue the conversation.

Be skeptical of anything that seems too good to be true

Deepfake scams often rely on fake videos or audio of individuals saying or doing things they wouldn’t normally do. If something seems out of character for the person you’re talking to, be skeptical. Ask questions about something only the two of you would know to help verify their identity.

Educate yourself

A great way to protect yourself from these types of scams is to stay informed about the latest deepfake techniques and trends. By understanding how deepfakes are created and used, you’ll be better equipped to spot them when they appear.

Use Dentity for verification

If you’ve received a call from someone you just met online or an individual claiming they represent a trusted institution like a bank, it’s harder to verify their identity.

In that situation, you can use a safety tool like Dentity. All you need to do is send a verification request via our peer-to-peer portal, and you’ll get back valuable information that can confirm the person’s identity. Best of all, Dentity is free to use and entirely private and safe. It’s trusted by the biggest national organizations throughout the United States, and billions of dollars of transactions each year are protected by Dentity’s ID verification system.

How Dentity Helps to Combat Online Deepfake Scams

Dentity is a no-cost, easy-to-use solution that can help protect your identity online and ensure you’re interacting with real people.

If you’re ever in doubt about whether you’re dealing with a real person online, you can use Dentity to address these security concerns quickly and easily. Once you’ve signed up, you can request verification information from others without revealing your own data.

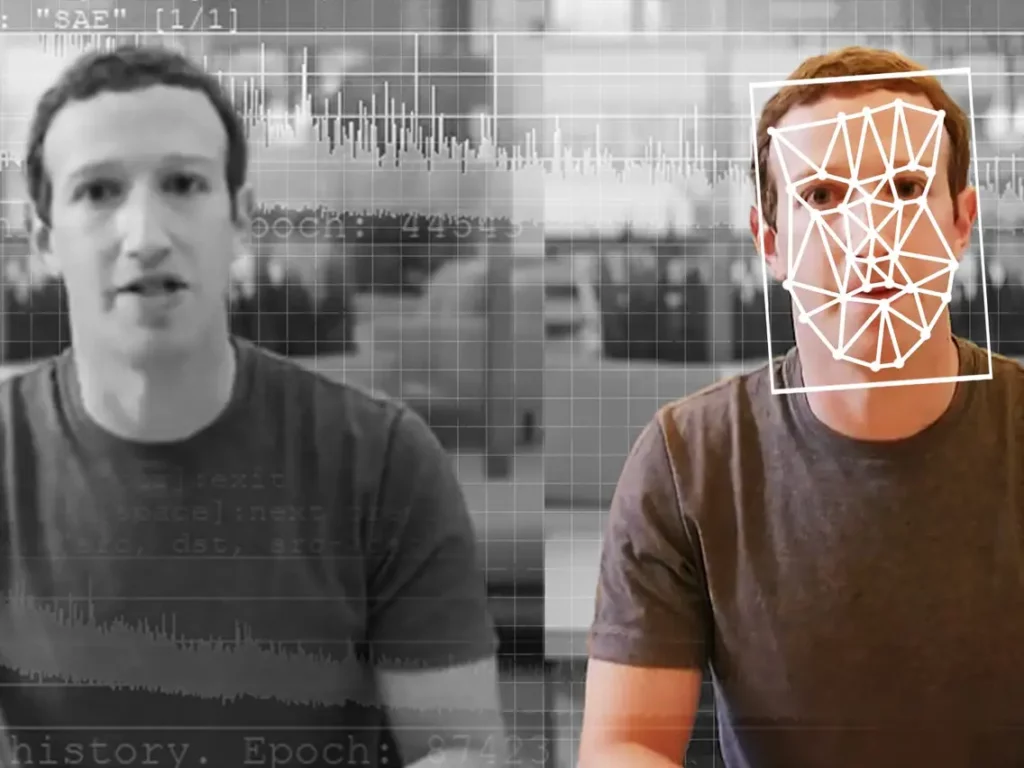

Our ID verification system uses 3D mapping technology specifically to combat deepfakes, which makes it more reliable than other ID verification systems that use only 2D imaging to evaluate a static image like your government-issued photo ID.

Don’t let yourself be vulnerable. Try Dentity for yourself today to learn more about the features that can protect you from deepfake scams.